Digital Gauge FAQ

Indication accuracy

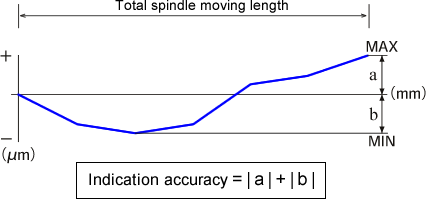

Indication accuracy refers to the measurement error of the linear gauge sensor. The error (the difference from the true value) is measured at each specified measurement point over the total travel of the spindle. The indication accuracy of the sensor is defined as the sum of the absolute values of the maximum error in the plus direction and the maximum error in the minus direction.
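
The definition above can be sketched in a few lines of Python. This is only an illustration: the function name `indication_accuracy`, the sign convention (sensor reading minus reference value), and the sample errors are assumptions made for the example, not data for any real sensor. The sketch also assumes that a direction with no error contributes zero.

```python
def indication_accuracy(errors_um):
    """Return the indication accuracy in micrometres.

    errors_um: errors (sensor reading minus reference value, an assumed
    sign convention) taken at each specified measurement point over the
    full spindle travel.
    """
    if not errors_um:
        raise ValueError("at least one error value is required")
    # Largest error in the plus direction (0 if no error was positive).
    max_plus = max(max(errors_um), 0.0)
    # Largest error in the minus direction, as a magnitude (0 if none).
    max_minus = max(-min(errors_um), 0.0)
    return max_plus + max_minus


# Hypothetical errors in µm, for illustration only.
errors = [0.5, 1.2, -0.8, 2.0, -1.0, 0.3]
print(indication_accuracy(errors))  # 3.0
```

Here the largest plus error is 2.0 µm and the largest minus error is 1.0 µm, so the sketch reports 3.0 µm.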

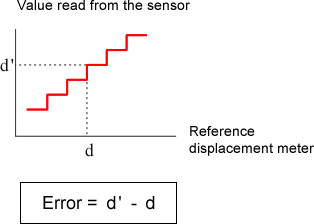

Accuracy is measured by comparison with a displacement meter that serves as the reference. The error is the difference between the value displayed by the sensor and the value indicated by the reference displacement meter at the moment the least significant digit of the sensor changes. For this reason, the indication accuracy of the sensor with a resolution of 10 µm is 3 µm, a value smaller than the resolution itself.
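
As a rough sketch of this comparison, the Python below takes the error at each digit change and shows that the resulting spread can come out smaller than the display step. The helper `errors_at_transitions`, the sign convention (displayed value minus reference value), and all readings are hypothetical and chosen only for illustration.

```python
def errors_at_transitions(transitions):
    """Return the error at each digit change, in micrometres.

    transitions: (displayed_um, reference_um) pairs, where displayed_um is
    the sensor value shown right after its least significant digit changed
    and reference_um is the reference displacement meter reading then.
    """
    return [displayed - reference for displayed, reference in transitions]


RESOLUTION_UM = 10  # display step of the hypothetical sensor

# Hypothetical readings in µm, for illustration only.
transitions = [(10, 9.5), (20, 20.5), (30, 31.0), (40, 38.5)]

errors = errors_at_transitions(transitions)
print(errors)  # [0.5, -0.5, -1.0, 1.5]

# Spread between the largest plus error and the largest minus error.
accuracy = max(max(errors), 0.0) + max(-min(errors), 0.0)
print(accuracy, accuracy < RESOLUTION_UM)  # 2.5 True
```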

Resolution

Resolution refers to the minimum value that the linear gauge sensor can read. For instance, the minimum read value of the linear gauge sensor GS-1530A is 10 µm.
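
To make the idea of a minimum read value concrete, here is a minimal Python sketch of a display that moves in 10 µm steps. The function `displayed_value` is hypothetical, and rounding down to the lower step is an assumption for the example; the actual rounding behaviour of a given sensor may differ.

```python
def displayed_value(position_um, resolution_um=10):
    """Return the reading shown for a position, in micrometres.

    Assumption: the display rounds down to the nearest multiple of
    resolution_um. A real sensor may round differently.
    """
    steps = int(position_um // resolution_um)
    return steps * resolution_um


# Hypothetical positions in µm, for illustration only.
print(displayed_value(27.4))  # 20
print(displayed_value(29.9))  # 20
print(displayed_value(30))    # 30
```

Under these assumptions, any two positions inside the same 10 µm step produce the same reading.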

Revised: 2009/03/16